Data-Engineer-Associate Practice Questions

AWS Certified Data Engineer - Associate (DEA-C01)

Last updated 4 days ago

Total questions: 289

Dive into our fully updated and stable Data-Engineer-Associate practice test platform, featuring all the latest AWS Certified Data Engineer exam questions added this week. Our preparation tool is more than just an Amazon Web Services study aid; it's a strategic advantage.

Our free AWS Certified Data Engineer practice questions are crafted to reflect the domains and difficulty of the actual exam. The detailed rationales explain the 'why' behind each answer, reinforcing key concepts of the Data-Engineer-Associate exam. Use this test to pinpoint the areas you need to focus your study on.

A retail company is using an Amazon Redshift cluster to support real-time inventory management. The company has deployed an ML model on a real-time endpoint in Amazon SageMaker.

The company wants to make real-time inventory recommendations. The company also wants to make predictions about future inventory needs.

Which solutions will meet these requirements? (Select TWO.)

A company has a JSON file that contains personally identifiable information (PII) data and non-PII data. The company needs to make the data available for querying and analysis. The non-PII data must be available to everyone in the company. The PII data must be available only to a limited group of employees. Which solution will meet these requirements with the LEAST operational overhead?
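The access rule in this scenario amounts to column-level filtering: everyone sees the non-PII fields, and only an authorized group sees the PII fields. AWS Lake Formation, for example, can grant permissions on a subset of a table's columns. The pure-Python sketch below models only that rule; it is not an AWS API, and the column names in `PII_COLUMNS` and the `can_read_pii` flag are hypothetical.

```python
from typing import Any

# Hypothetical classification of which JSON fields hold PII.
PII_COLUMNS = {"name", "email", "phone"}


def visible_columns(record: dict[str, Any], can_read_pii: bool) -> dict[str, Any]:
    """Return the part of a record that a principal is allowed to see."""
    if can_read_pii:
        # Members of the limited group see every field.
        return dict(record)
    # Everyone else sees only the non-PII fields.
    return {key: value for key, value in record.items() if key not in PII_COLUMNS}


if __name__ == "__main__":
    row = {"order_id": 1, "name": "Ana", "email": "ana@example.com"}
    print(visible_columns(row, can_read_pii=False))
    print(visible_columns(row, can_read_pii=True))
```

Managed column-level permissions enforce the same rule centrally, so the data does not have to be split into separate copies for each audience.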

A company wants to migrate an application and an on-premises Apache Kafka server to AWS. The application processes incremental updates that an on-premises Oracle database sends to the Kafka server. The company wants to use the replatform migration strategy instead of the refactor strategy.

Which solution will meet these requirements with the LEAST management overhead?

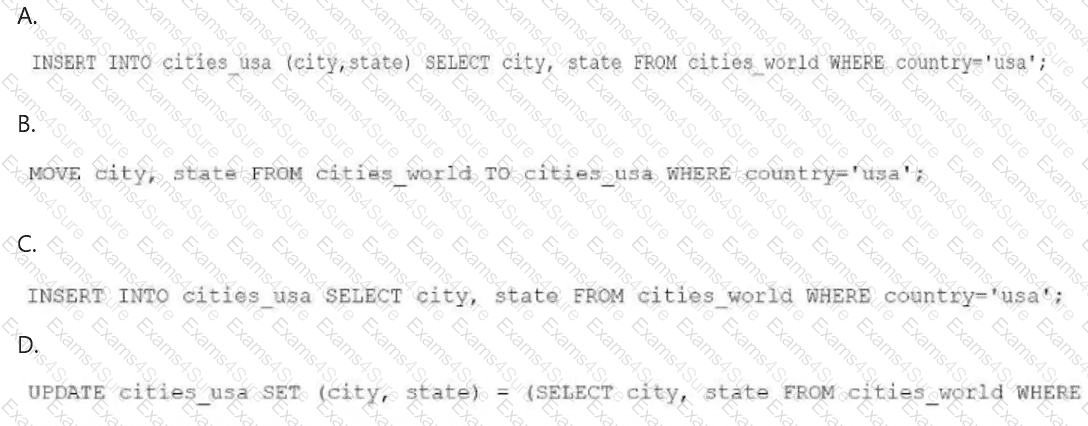

A data engineer needs to create an Amazon Athena table based on a subset of data from an existing Athena table named cities_world. The cities_world table contains cities that are located around the world. The data engineer must create a new table named cities_us to contain only the cities from cities_world that are located in the US.

Which SQL statement should the data engineer use to meet this requirement?
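Athena supports CREATE TABLE AS SELECT (CTAS), which creates a new table from the result of a query. The sketch below only assembles such a statement as a string. It assumes the country is stored in a column named `country` with the value `'US'`; both are hypothetical, because the question does not give the schema of `cities_world`.

```python
def build_ctas(new_table: str, source_table: str, filter_column: str, filter_value: str) -> str:
    """Build a CTAS statement that copies the matching rows into a new table."""
    # Escape single quotes in the literal by doubling them (standard SQL escaping).
    literal = filter_value.replace("'", "''")
    return (
        f"CREATE TABLE {new_table} AS "
        f"SELECT * FROM {source_table} "
        f"WHERE {filter_column} = '{literal}'"
    )


if __name__ == "__main__":
    print(build_ctas("cities_us", "cities_world", "country", "US"))
```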

A retail company stores order information in an Amazon Aurora table named Orders. The company needs to create operational reports from the Orders table with minimal latency. The Orders table contains billions of rows, and over 100,000 transactions can occur each second.

A marketing team needs to join the Orders data with an Amazon Redshift table named Campaigns in the marketing team's data warehouse. The operational Aurora database must not be affected.

Which solution will meet these requirements with the LEAST operational effort?

A data engineer is using AWS Glue to build an extract, transform, and load (ETL) pipeline that processes streaming data from sensors. The pipeline sends the data to an Amazon S3 bucket in near real-time. The data engineer also needs to perform transformations and join the incoming data with metadata that is stored in an Amazon RDS for PostgreSQL database. The data engineer must write the results back to a second S3 bucket in Apache Parquet format.

Which solution will meet these requirements?
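AWS Glue streaming ETL jobs run on Apache Spark Structured Streaming, which supports joining a stream with a static dataset such as a table read from a database. The pure-Python sketch below models only the semantics of that enrichment step (an inner join on a key). It is not Glue or Spark code, the `sensor_id` key and metadata fields are hypothetical, and writing the Parquet output is out of scope.

```python
from typing import Any, Iterable, Iterator


def enrich(
    events: Iterable[dict[str, Any]],
    metadata: dict[str, dict[str, Any]],
) -> Iterator[dict[str, Any]]:
    """Join each incoming sensor event with its metadata row by sensor_id."""
    for event in events:
        meta = metadata.get(event["sensor_id"])
        if meta is None:
            # Inner-join semantics: drop events that have no matching metadata row.
            continue
        # Merge the two records; metadata values win on key collisions.
        yield {**event, **meta}


if __name__ == "__main__":
    # Hypothetical metadata, standing in for rows loaded from PostgreSQL.
    sensors = {"s1": {"site": "plant-a"}}
    stream = [{"sensor_id": "s1", "temp": 20}, {"sensor_id": "s9", "temp": 5}]
    print(list(enrich(stream, sensors)))
```

Because the metadata changes far less often than the sensor stream, the sketch treats it as a lookup table held in memory.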