DP-700 Practice Questions

Implementing Data Engineering Solutions Using Microsoft Fabric

Last Update 11 hours ago

Total Questions: 113

Dive into our fully updated and stable DP-700 practice test platform, featuring all the latest Microsoft Certified: Fabric Data Engineer Associate exam questions added this week. Our preparation tool is more than just a Microsoft study aid; it's a strategic advantage.

Our free Microsoft Certified: Fabric Data Engineer Associate practice questions are crafted to reflect the domains and difficulty of the actual exam. The detailed rationales explain the 'why' behind each answer, reinforcing key DP-700 concepts. Use this test to pinpoint the areas where you need to focus your study.

You need to recommend a solution for handling old files. The solution must meet the technical requirements. What should you include in the recommendation?

You need to schedule the population of the medallion layers to meet the technical requirements.

What should you do?

You need to ensure that usage of the data in the Amazon S3 bucket meets the technical requirements.

What should you do?

You need to populate the MAR1 data in the bronze layer.

Which two types of activities should you include in the pipeline? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

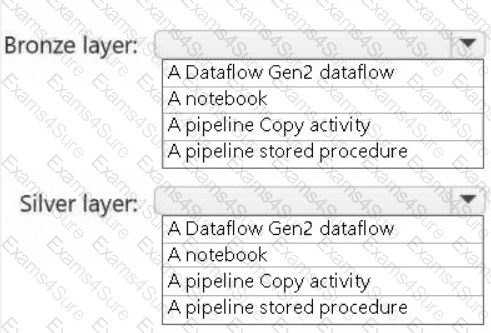

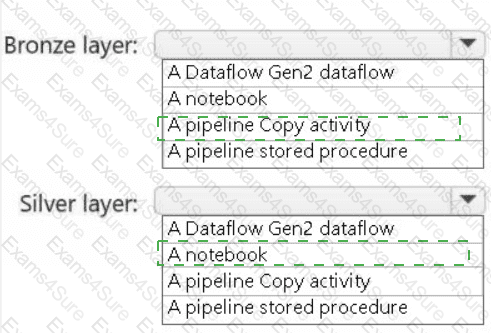

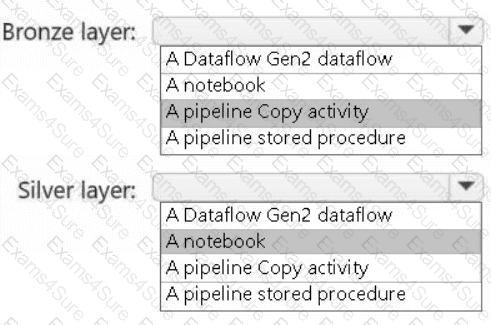

You need to recommend a method to populate the POS1 data to the lakehouse medallion layers.

What should you recommend for each layer? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to recommend a solution to resolve the MAR1 connectivity issues. The solution must minimize development effort. What should you recommend?

You need to ensure that WorkspaceA can be configured for source control. Which two actions should you perform?

Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

You need to ensure that the data analysts can access the gold layer lakehouse.

What should you do?

You have a Fabric notebook named Notebook1 that has been executing successfully for the last week.

During the last run, Notebook1 executed nine jobs.

You need to view the jobs in a timeline chart.

What should you use?

You have a Fabric deployment pipeline that uses three workspaces named Dev, Test, and Prod.

You need to deploy an eventhouse as part of the deployment process.

What should you use to add the eventhouse to the deployment process?
