MuleSoft-Integration-Architect-I Practice Questions

Salesforce Certified MuleSoft Platform Integration Architect (Mule-Arch-202)

Last Update 15 hours ago

Total Questions : 273

Dive into our fully updated and stable MuleSoft-Integration-Architect-I practice test platform, featuring all the latest Salesforce MuleSoft exam questions added this week. Our preparation tool is more than just a Salesforce study aid; it's a strategic advantage.

Our free Salesforce MuleSoft practice questions are crafted to reflect the domains and difficulty of the actual exam. The detailed rationales explain the 'why' behind each answer, reinforcing key concepts for the MuleSoft-Integration-Architect-I exam. Use this test to pinpoint the areas where you need to focus your study.

An organization has various integrations implemented as Mule applications. Some of these Mule applications are deployed to customer-hosted Mule runtimes (on-premises) while others execute in the MuleSoft-hosted runtime plane (CloudHub). To perform the integration functionality, these Mule applications connect to various backend systems, with multiple applications typically needing to access the same backend systems.

How can the organization most effectively avoid creating duplicates in each Mule application of the credentials required to access the backend systems?

Which Exchange asset type represents configuration modules that extend the functionality of an API and enforce capabilities such as security?

An organization is evaluating using the CloudHub shared load balancer (SLB) vs. creating a CloudHub dedicated load balancer (DLB). They are evaluating how this choice affects the various types of certificates used by CloudHub-deployed Mule applications, including MuleSoft-provided, customer-provided, or Mule application-provided certificates.

What type of restrictions exist on the types of certificates that can be exposed by the CloudHub Shared Load Balancer (SLB) to external web clients over the public internet?

An automation engineer needs to write scripts to automate the steps of the API lifecycle, including steps to create, publish, deploy and manage APIs and their implementations in Anypoint Platform.

What Anypoint Platform feature can be used to automate the execution of all these actions in scripts in the easiest way without needing to directly invoke the Anypoint Platform REST APIs?

An organization plans to extend its Mule APIs to the EU (Frankfurt) region.

Currently, all Mule applications are deployed to CloudHub 1.0 in the default North American region, from the North America control plane, following this naming convention: {API-name}-{environment} (for example, Orders-sapi-dev, Orders-sapi-qa, Orders-sapi-prod, etc.).

There is no network restriction to block communications between APIs.

What strategy should be implemented in order to deploy the same Mule APIs to the CloudHub 1.0 EU region from the North America control plane, as well as to minimize latency between APIs and target users and systems in Europe?

Mule application muleA, deployed in CloudHub, uses Object Store v2 to share data across instances. As part of a new requirement, application muleB, which is deployed in the same region, needs to access this Object Store.

Which of the following options would you suggest to achieve minimum latency in this scenario?

As part of a project, an existing Java implementation is being migrated to MuleSoft. The business has a very tight budget and wishes to complete the project in the most economical way possible.

A canonical object model written in Java is already part of the existing implementation. The same object model is required by the Mule application for a business use case. What is the best way to achieve this?

As an enterprise architect, what are two reasons to use a canonical data model in a new integration project using the MuleSoft Anypoint Platform? (Choose two answers.)
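For readers unfamiliar with the term, the following is a minimal sketch of what a canonical data model looks like in practice: two system-specific record shapes are mapped onto one shared representation. The record layouts, field names, and the `from_crm` / `from_billing` helpers are all hypothetical and are not taken from the exam or from any Mule API; in a Mule application this mapping would typically be written in DataWeave rather than Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalCustomer:
    """Shared (canonical) shape that every integration agrees on."""
    customer_id: str
    full_name: str
    email: str


def from_crm(record: dict) -> CanonicalCustomer:
    # Hypothetical CRM layout: separate name fields, numeric Id.
    return CanonicalCustomer(
        customer_id=str(record["Id"]),
        full_name=f'{record["FirstName"]} {record["LastName"]}',
        email=record["Email"].lower(),
    )


def from_billing(record: dict) -> CanonicalCustomer:
    # Hypothetical billing layout: single name field, string customer number.
    return CanonicalCustomer(
        customer_id=str(record["cust_no"]),
        full_name=record["name"],
        email=record["contact_email"].lower(),
    )


crm = from_crm(
    {"Id": 42, "FirstName": "Ada", "LastName": "Lovelace", "Email": "Ada@Example.com"}
)
billing = from_billing(
    {"cust_no": "42", "name": "Ada Lovelace", "contact_email": "ada@example.com"}
)
```

Once both sources are expressed in the canonical shape, downstream consumers only need to understand `CanonicalCustomer`, regardless of which backend produced the data.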

A Mule application contains a Batch Job with two Batch Steps (Batch_Step_1 and Batch_Step_2). A payload with 1000 records is received by the Batch Job.

How many threads are used by the Batch Job to process records, and how does each Batch Step process records within the Batch Job?

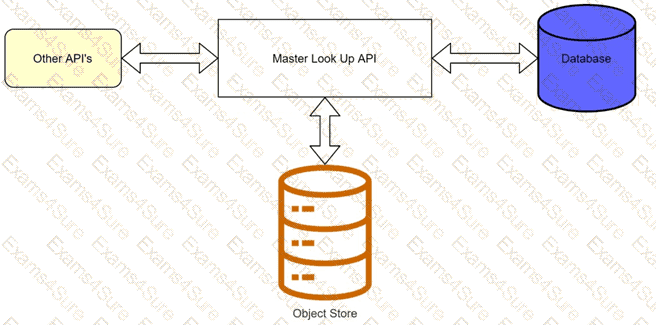

A banking company is developing a new set of APIs for its online business. One of the critical APIs is a master lookup API, which is a System API. This master lookup API uses a persistent object store. This API will be used by all other APIs to provide master lookup data.

The master lookup API is deployed on two CloudHub workers of 0.1 vCore each because there is a lot of master data to be cached. Master lookup data is stored as key-value pairs. The cache is refreshed if the key is not found in the cache.

During performance testing, it was observed that the master lookup API has a higher response time due to the execution of database queries to fetch the master lookup data.

Due to this performance issue, the go-live of the online business is on hold, which could cause financial loss to the bank.

As an integration architect, which of the options below would you suggest to resolve the performance issue?
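The lookup behavior described in this scenario (read from the cache, and load from the database only when the key is missing) can be sketched as follows. This is an in-memory illustration only, assuming a hypothetical `LookupCache` class and a plain dictionary standing in for the database; it does not use the Mule Object Store connector and is not intended to indicate the answer to the question.

```python
from typing import Callable, Dict


class LookupCache:
    """Serve lookups from a cache; load from the backend only on a miss."""

    def __init__(self, load_from_backend: Callable[[str], str]) -> None:
        self._load = load_from_backend
        self._entries: Dict[str, str] = {}
        self.backend_calls = 0  # counts how often the slow path is taken

    def get(self, key: str) -> str:
        if key not in self._entries:
            # Cache miss: query the backend once and remember the result.
            self.backend_calls += 1
            self._entries[key] = self._load(key)
        return self._entries[key]


# Hypothetical master data standing in for the database.
MASTER_DATA = {"USD": "US Dollar", "EUR": "Euro"}

cache = LookupCache(lambda key: MASTER_DATA[key])
first = cache.get("USD")   # miss: goes to the backend
second = cache.get("USD")  # hit: served from the cache
```

With this pattern, response time depends heavily on how often a lookup misses the cache, since only misses incur a database query.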