MLA-C01 Practice Questions

AWS Certified Machine Learning Engineer - Associate

Last Update 4 days ago

Total Questions : 241

Dive into our fully updated and stable MLA-C01 practice test platform, featuring all the latest AWS Certified Associate exam questions added this week. Our preparation tool is more than just an Amazon Web Services study aid; it's a strategic advantage.

Our free AWS Certified Associate practice questions are crafted to reflect the domains and difficulty of the actual exam. The detailed rationales explain the 'why' behind each answer, reinforcing key concepts about MLA-C01. Use this test to pinpoint the areas where you need to focus your study.

A streaming media company uses a churn risk model to assess the churn risk of its premium tier customers. Each month, the company runs an aggregation job on individual customers’ streaming data and uploads the user engagement features to an Amazon S3 bucket. The company manually re-trains the churn risk model with the user engagement data.

The current process requires manual intervention and is time-consuming. The company needs a solution that automatically re-trains the churn prediction model with the most recent data.

Which solution will meet these requirements with the SHORTEST delay?

A company has significantly increased the amount of data that is stored as .csv files in an Amazon S3 bucket. Data transformation scripts and queries are now taking much longer than they used to take.

An ML engineer must implement a solution to optimize the data for query performance.

Which solution will meet this requirement with the LEAST operational overhead?

A company uses a training job on Amazon SageMaker AI to train a neural network. The job first trains a model and then evaluates the model's performance against a test dataset. The company uses the results from the evaluation phase to decide if the trained model will go to production.

The training phase takes too long. The company needs solutions that can shorten training time without decreasing the model's final performance.

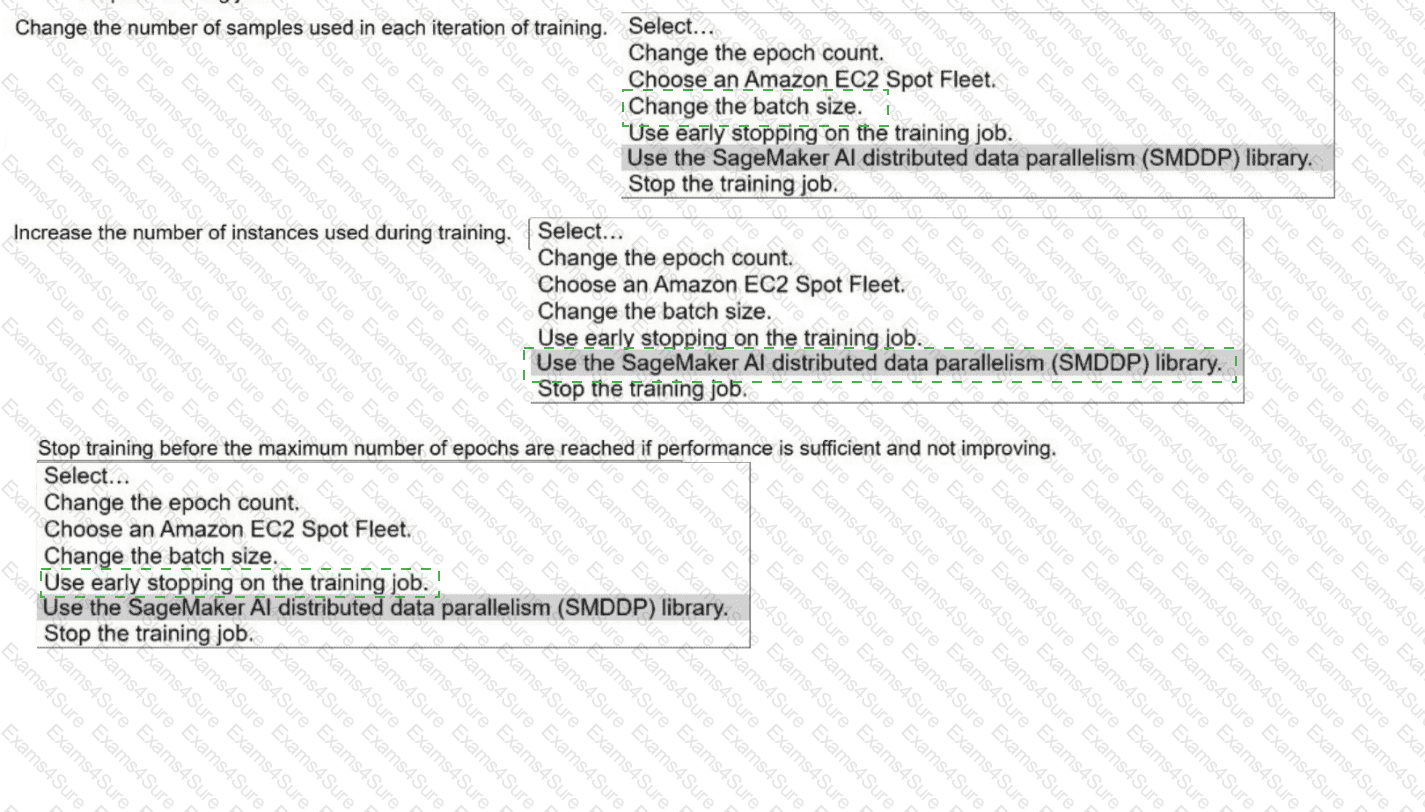

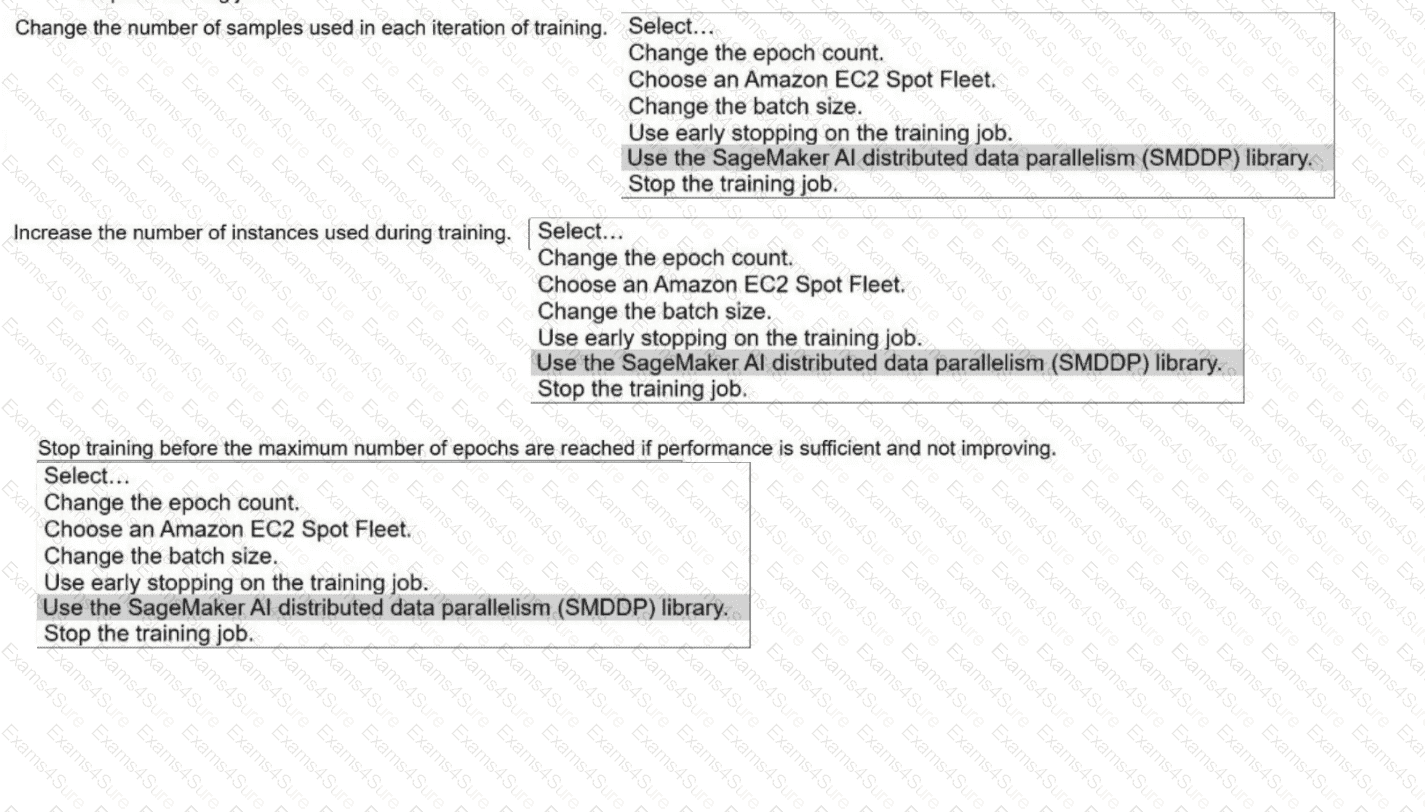

Select the correct solutions from the following list to meet the requirements for each description. Select each solution one time or not at all. (Select THREE.)

· Change the epoch count.

· Choose an Amazon EC2 Spot Fleet.

· Change the batch size.

· Use early stopping on the training job.

· Use the SageMaker AI distributed data parallelism (SMDDP) library.

· Stop the training job.

An ML model is deployed in production. The model has performed well and has met its metric thresholds for months.

An ML engineer who is monitoring the model observes a sudden degradation. The performance metrics of the model are now below the thresholds.

What could be the cause of the performance degradation?

An ML engineering team has a data processing pipeline that ingests sensor data from IoT devices into an Amazon S3 bucket. The pipeline then processes the data by using AWS Glue extract, transform, and load (ETL) jobs for ML modeling. The team noticed throttling errors in the ETL jobs. The data ingestion process has also been slower than normal.

What is the cause of the problem?

An ML engineer is using an Amazon SageMaker AI shadow test to evaluate a new model that is hosted on a SageMaker AI endpoint. The shadow test requires significant GPU resources for high performance. The production variant currently runs on a less powerful instance type.

The ML engineer needs to configure the shadow test to use a higher performance instance type for a shadow variant. The solution must not affect the instance type of the production variant.

Which solution will meet these requirements?

A company launches a feature that predicts home prices. An ML engineer trained a regression model using the SageMaker AI XGBoost algorithm. The model performs well on training data but underperforms on real-world validation data.

Which solution will improve the validation score with the LEAST implementation effort?

An ML engineer has trained a neural network by using stochastic gradient descent (SGD). The neural network performs poorly on the test set. The values for training loss and validation loss remain high and show an oscillating pattern. The values decrease for a few epochs and then increase for a few epochs before repeating the same cycle.

What should the ML engineer do to improve the training process?

Case Study

A company is building a web-based AI application by using Amazon SageMaker. The application will provide the following capabilities and features: ML experimentation, training, a central model registry, model deployment, and model monitoring.

The application must ensure secure and isolated use of training data during the ML lifecycle. The training data is stored in Amazon S3.

The company must implement a manual approval-based workflow to ensure that only approved models can be deployed to production endpoints.

Which solution will meet this requirement?

A company has a custom extract, transform, and load (ETL) process that runs on premises. The ETL process is written in the R language and runs for an average of 6 hours. The company wants to migrate the process to run on AWS.

Which solution will meet these requirements?